APIs give you direct but limited access to the data that you want from a software but sometimes you might find yourself in a scenario where there might not be an API to access the data you want, or the access to the API might be too limited or expensive.In these scenarios, web scraping would allow you to access the data as long as it is available on a website. Web scraping allows you to extract data from any website through the use of web scraping software.

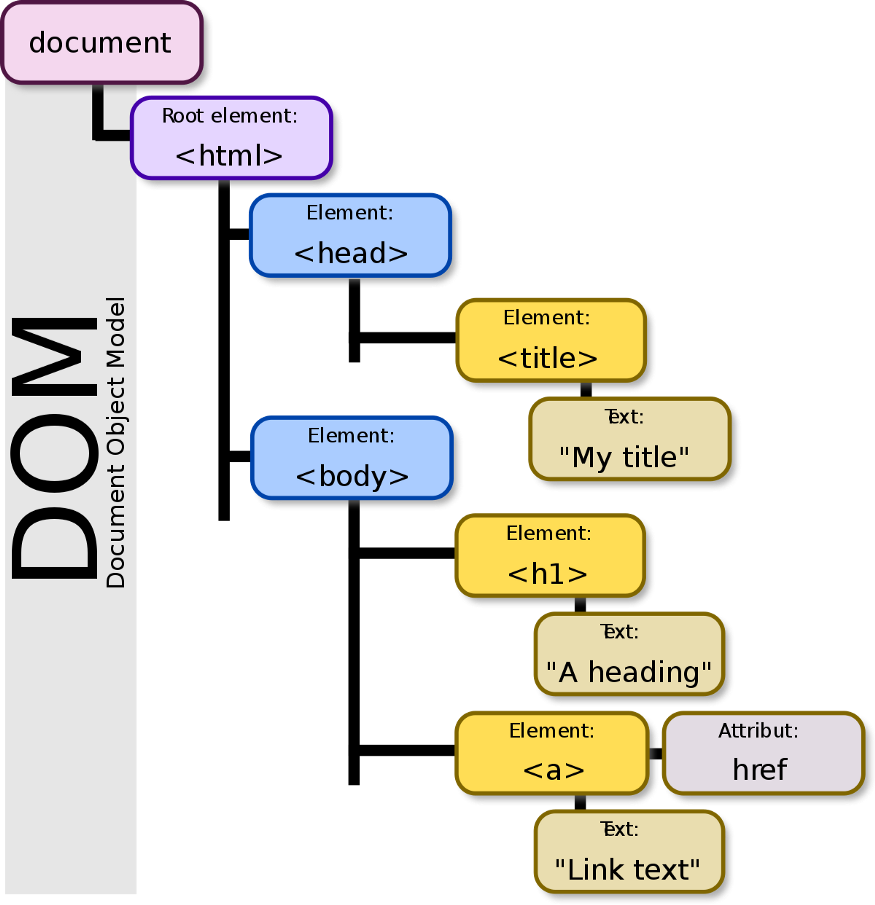

Before you do web scraping you should know something called as DOM Document Object Model (DOM) which is a cross-platform and language-independent interface that treats an XML or HTML document as a tree structure wherein each node is an object representing a part of the document. The DOM represents a document with a logical tree.

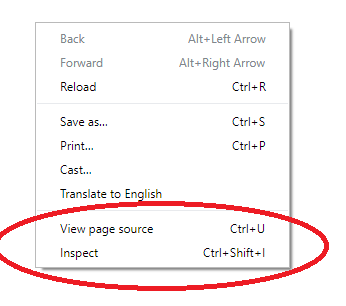

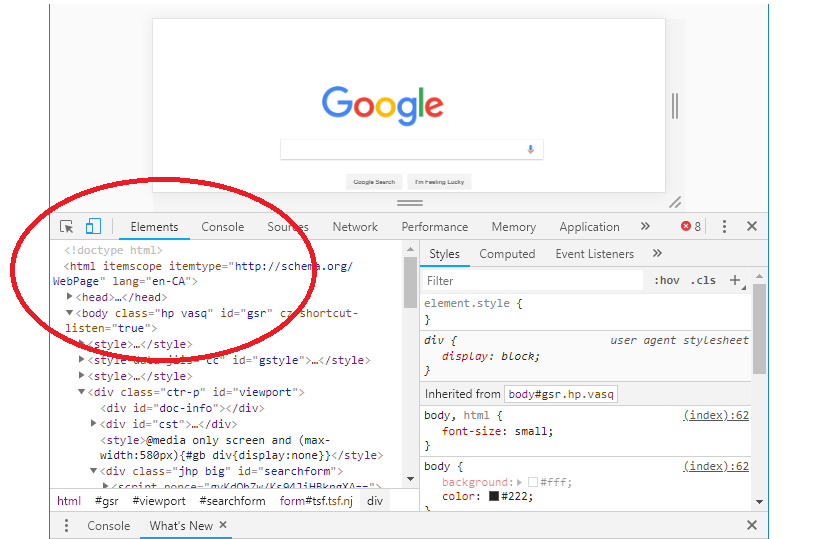

All you need to do is visit any web page right click and select inspect or use ctrl + shift + i and it will show you the HTML that makes up the page.

You can search the DOM Tree by string, CSS selector, or XPath selector or You can edit the DOM on the fly and see how those changes affect the page. All the changes you make will be temporary in nature so do not worry about messing any code enjoy your learning experience.

Playing around the DOM helps you to locate the data that you want to extract from a web page that’s where web-scraping comes into the picture. There are many Python libraries available to perform web scraping the point is identifying which one will be more useful and is quick in providing the data you want and the way you want.

Python has libraries like Requests-HTML, lxml , Beautiful soup or a web scraping frame work Scrapy to provide you the data you want. Remember when it comes to a data scientist or a data analyst there is no control on the greed for more data every time you get into pulling the data you would always wish you had more and some other library is better than the previous one. Lets me just give my simple understanding about Requests-HTML, Beautiful soup & Scrapy and leave the rest up-to you to decide 🙂

Requests-HTML

The most basic Python library for web scraping is‘Requests-HTML’ lets us make HTML requests to the website’s server for retrieving the data on its page which helps you getting the HTML content of the web page and its suggested to use this library when you simply want to communicate with the websites. Its quite useful when you have to collect the links or the posts or specific element from the CSS section. It is very useful for simple and non recurring web scraping tasks and a to go to library for HTTP requests

Beautiful soup

Beautiful soup is library designed for quick scraping projects. It allows you to select and navigate the tree-like structure of HTML documents, searching for particular tags, attributes or ids. It also allows you to then further traverse the HTML documents through relations like children or siblings. In other words, with Beautiful Soup, you could first select a specific div tag and then search through all of its nested tags. Beautiful Soup as a library is popular because it is is easier to work with and well suited for beginners and easier to learn.It again very useful for simple and non recurring web scraping tasks and a to go to library for Data Parsing

Scrapy

Scrapy is not just a library but a complete framework for web scraping having a large ecosystem of developers contributing projects and support on Github & Stack Overflow. When it comes to recurring or large scale web scraping need all the heavy lifting that is required is smoothly done by Scrapy. It can get you multiple HTTP requests at the same time or create pipelines or extract data from dynamic websites. when you are just beginning to learn just remember it has a steep learning curve and you go to Scrapy for complete web scraping solution

Coming back to the greed for data the idea is never about collecting the data its about how you structure it and use it

Thats All folks for the Day — See you on the other side of the break 🙂 Happy Learning!!

Would love to incessantly get updated outstanding web blog! .

certainly like your web-site but you have to check the spelling on several of your posts. A number of them are rife with spelling problems and I in finding it very bothersome to inform the reality on the other hand I will certainly come again again.

Keep up the fantastic piece of work, I read few blog posts on this web site and I think that your weblog is really interesting and has got lots of great information.

I have been exploring for a little for any high quality articles or blog posts on this sort of space . Exploring in Yahoo I at last stumbled upon this website. Studying this info So i?¦m glad to exhibit that I’ve an incredibly good uncanny feeling I found out just what I needed. I so much indubitably will make certain to don?¦t omit this website and give it a glance regularly.

I like what you guys are up too. Such smart work and reporting! Carry on the superb works guys I’ve incorporated you guys to my blogroll. I think it’ll improve the value of my website 🙂

[url=http://happyfamilypharmacy24.online/]online pharmacy pain medicine[/url]

где купить справку

Thanks for the marvelous posting! I really enjoyed reading it, you might be a great author. I will always bookmark your blog and definitely will come back at some point. I want to encourage one to continue your great writing, have a nice holiday weekend!

Please let me know if you’re looking for a article author for your site. You have some really great posts and I believe I would be a good asset. If you ever want to take some of the load off, I’d really like to write some articles for your blog in exchange for a link back to mine. Please send me an e-mail if interested. Kudos!

We are a group of volunteers and starting a new scheme in our community. Your site provided us with helpful information to work on. You have performed an impressive process and our whole group can be grateful to you.

If you desire to improve your familiarity simply keep visiting this site and be updated with the most recent gossip posted here.

[url=http://hydroxychloroquine.skin/]quineprox 30[/url]

I’m not sure where you are getting your info, but good topic. I needs to spend some time learning more or understanding more. Thanks for great information I was looking for this information for my mission.

Very good blog! Do you have any helpful hints for aspiring writers? I’m planning to start my own site soon but I’m a little lost on everything. Would you advise starting with a free platform like WordPress or go for a paid option? There are so many choices out there that I’m totally confused .. Any ideas? Thanks a lot!

[url=http://prednisolonetab.skin/]prednisolone 10 mg daily[/url]

What’s up friends, how is all, and what you want to say about this piece of writing, in my view its actually awesome in favor of me.

[url=https://erectafil.foundation/]erectafil 5[/url]

Hi there! This post couldn’t be written any better! Reading through this post reminds me of my previous room mate! He always kept chatting about this. I will forward this write-up to him. Fairly certain he will have a good read. Thanks for sharing!

Great post.

An interesting discussion is worth comment. I believe that you ought to write more on this subject, it might not be a taboo subject but generally people do not speak about such subjects. To the next! Many thanks!!

Hi, Neat post. There is a problem with your site in internet explorer, could check this? IE still is the marketplace leader and a big part of other folks will leave out your magnificent writing due to this problem.

Hi! I just wanted to ask if you ever have any trouble with hackers? My last blog (wordpress) was hacked and I ended up losing months of hard work due to no data backup. Do you have any solutions to prevent hackers?

I think the admin of this website is really working hard for his site, because here every information is quality based information.

Great article, exactly what I needed.

Hi, i think that i saw you visited my blog so i got here to go back the want?.I am trying to in finding things to improve my site!I assume its good enough to use some of your concepts!!

Pretty section of content. I just stumbled upon your website and in accession capital to assert that I acquire in fact enjoyed account your blog posts. Any way I’ll be subscribing to your augment and even I achievement you access consistently rapidly.

[url=http://lyricalm.online/]lyrica drug cost[/url]

Hey I know this is off topic but I was wondering if you knew of any widgets I could add to my blog that automatically tweet my newest twitter updates. I’ve been looking for a plug-in like this for quite some time and was hoping maybe you would have some experience with something like this. Please let me know if you run into anything. I truly enjoy reading your blog and I look forward to your new updates.

This article offers clear idea for the new users of blogging, that actually how to do blogging.

Undeniably believe that which you stated. Your favorite justification appeared to be on the internet the simplest thing to be aware of. I say to you, I definitely get irked while people consider worries that they plainly do not know about. You managed to hit the nail upon the top and also defined out the whole thing without having side effect , people can take a signal. Will likely be back to get more. Thanks

Excellent web site you’ve got here.. It’s hard to find high-quality writing like yours these days. I truly appreciate people like you! Take care!!

If some one wants to be updated with most up-to-date technologies afterward he must be go to see this web site and be up to date daily.

Right now it looks like BlogEngine is the best blogging platform out there right now. (from what I’ve read) Is that what you’re using on your blog?

[url=https://strattera.foundation/]cheap strattera[/url]

Hey There. I found your blog the use of msn. This is a very well written article. I will be sure to bookmark it and come back to read more of your useful information. Thank you for the post. I will definitely comeback.

[url=http://amoxicillinhe.online/]augmentin 1500[/url]

Hey There. I found your blog using msn. This is a very well written article. I will be sure to bookmark it and come back to read more of your useful information. Thank you for the post. I will definitely comeback.

Hi there to every one, it’s in fact a good for me to pay a visit this site, it consists of priceless Information.

My spouse and I stumbled over here by a different website and thought I might as well check things out. I like what I see so i am just following you. Look forward to looking over your web page for a second time.

A fascinating discussion is worth comment. I do believe that you should write more on this subject, it might not be a taboo subject but generally people do not speak about such topics. To the next! Cheers!!

Sweet blog! I found it while surfing around on Yahoo News. Do you have any tips on how to get listed in Yahoo News? I’ve been trying for a while but I never seem to get there! Cheers

Hello would you mind stating which blog platform you’re working with? I’m planning to start my own blog in the near future but I’m having a difficult time making a decision between BlogEngine/Wordpress/B2evolution and Drupal. The reason I ask is because your design seems different then most blogs and I’m looking for something completely unique. P.S My apologies for getting off-topic but I had to ask!

Aw, this was an incredibly nice post. Finding the time and actual effort to make a really good article but what can I say I procrastinate a lot and never seem to get anything done.

Thank you a bunch for sharing this with all people you really realize what you are talking approximately! Bookmarked. Please also talk over with my site =). We will have a link exchange agreement among us

Thanks designed for sharing such a nice idea, post is good, thats why i have read it fully

An impressive share! I have just forwarded this onto a coworker who had been doing a little research on this. And he in fact bought me lunch because I discovered it for him… lol. So let me reword this…. Thank YOU for the meal!! But yeah, thanx for spending the time to discuss this issue here on your web site.

It’s a shame you don’t have a donate button! I’d without a doubt donate to this brilliant blog! I suppose for now i’ll settle for book-marking and adding your RSS feed to my Google account. I look forward to brand new updates and will talk about this site with my Facebook group. Chat soon!

I loved as much as you will receive carried out right here. The sketch is tasteful, your authored subject matter stylish. nonetheless, you command get bought an impatience over that you wish be delivering the following. unwell unquestionably come further formerly again since exactly the same nearly a lot often inside case you shield this increase.

What’s up, always i used to check webpage posts here early in the break of day, as i like to gain knowledge of more and more.

[url=http://hydroxyzine.science/]over the counter atarax[/url]

[url=https://hydroxyzine.party/]atarax 10 mg tablet[/url]

[url=https://robaxin.party/]robaxin 500 mg tablet price[/url]

No matter if some one searches for his essential thing, so he/she wants to be available that in detail, thus that thing is maintained over here.

[url=http://lipitor.charity/]atorvastatin lipitor[/url]

[url=https://onlinedrugstore.science/]top online pharmacy 247[/url]

[url=https://pharmacyonline.science/]mexico pharmacy order online[/url]

[url=http://levofloxacin.science/]levofloxacin[/url]

[url=http://prazosin.gives/]prazosin hydrochloride[/url]

[url=http://baclofen.party/]medicine baclofen 10 mg[/url]

[url=http://azithromycin.solutions/]where to get zithromax over the counter[/url]

[url=http://robaxin.party/]methocarbamol robaxin 500mg[/url]

[url=https://pharmacyonline.science/]canadian pharmacy coupon[/url]

hey there and thank you for your information I’ve definitely picked up anything new from right here. I did however expertise some technical issues using this web site, since I experienced to reload the web site a lot of times previous to I could get it to load properly. I had been wondering if your web hosting is OK? Not that I am complaining, but sluggish loading instances times will very frequently affect your placement in google and can damage your quality score if advertising and marketing with Adwords. Anyway I’m adding this RSS to my e-mail and can look out for a lot more of your respective fascinating content. Make sure you update this again soon.

Howdy are using WordPress for your blog platform? I’m new to the blog world but I’m trying to get started and create my own. Do you need any coding knowledge to make your own blog? Any help would be greatly appreciated!

Грамотный частный эромассаж Москва с джакузи

[url=https://cialisonlinedrugstore.charity/]tadalafil soft gel capsule[/url]

[url=https://triamterene.charity/]triamterene hctz 37.5-25 mg[/url]

[url=http://hydroxyzine.party/]atarax for eczema[/url]

[url=https://prednisolone.solutions/]buy prednisolone 5mg online uk[/url]

[url=https://metformin.party/]metformin over the counter uk[/url]

[url=https://pharmacyonline.science/]reliable rx pharmacy[/url]

[url=https://hydroxyzine.party/]atarax 10mg otc[/url]

[url=http://pharmacyonline.science/]tops pharmacy[/url]

Hurrah! After all I got a blog from where I know how to in fact get helpful information regarding my study and knowledge.

[url=https://triamterene.charity/]triamterene-hctz 75-50 mg tab[/url]

[url=https://nolvadextn.online/]nolvadex tablets price[/url]

[url=https://celecoxib.party/]celebrex gel[/url]

[url=https://celecoxib.party/]celebrex 200mg generic[/url]

[url=https://happyfamilyonlinepharmacy.net/]secure medical online pharmacy[/url]

[url=https://trimox.science/]augmentin 750 mg tablet[/url]

[url=https://difluca.com/]buy fluconazol without prescription[/url]

[url=https://finasteride.skin/]propecia online without prescription[/url]

[url=http://acutanetab.online/]accutane tablets[/url]

[url=https://suhagra.party/]suhagra 100mg online india[/url]

[url=https://finasteride.skin/]finasteride hair[/url]

[url=http://lisinopril.party/]lisinopril 10 mg online no prescription[/url]

[url=http://albuterolx.com/]ventolin 90 mg[/url]

[url=https://celecoxib.party/]drug celebrex[/url]

If some one wants to be updated with most recent technologies after that he must be visit this site and be up to date daily.

[url=https://diflucanfns.online/]over the counter diflucan cream[/url]

[url=http://suhagra.party/]suhagra 100 canada[/url]

[url=https://lyrjca.online/]lyrica price in mexico[/url]

Hello there, I do think your website might be having internet browser compatibility issues. When I look at your website in Safari, it looks fine however when opening in Internet Explorer, it has some overlapping issues. I just wanted to give you a quick heads up! Besides that, great blog!

[url=https://acutanetab.online/]best generic accutane[/url]

[url=https://happyfamilyonlinepharmacy.net/]canadian mail order pharmacy[/url]

[url=http://phenergan.download/]online phenergan[/url]

[url=http://diflucanfns.online/]diflucan 150mg fluconazole[/url]

[url=http://happyfamilyonlinepharmacy.net/]online pharmacy europe[/url]

[url=https://bactrim.download/]where can i get bactrim[/url]

Hurrah! Finally I got a website from where I know how to in fact take helpful data regarding my study and knowledge.

[url=http://atomoxetine.pics/]buy strattera online uk[/url]

[url=http://atomoxetine.pics/]strattera 10 mg cap[/url]

[url=http://retinoa.skin/]retino[/url]

Thanks for sharing your thoughts on %meta_keyword%. Regards

[url=https://finasteride.skin/]where to buy finasteride online[/url]

[url=http://hydroxychloroquine.best/]plaquenil nz[/url]

[url=http://synteroid.online/]synthroid price comparison[/url]

[url=http://happyfamilyonlinepharmacy.net/]legit online pharmacy[/url]

[url=http://finasteride.skin/]propecia[/url]

[url=https://celecoxib.party/]celibrax[/url]

I haven?¦t checked in here for some time because I thought it was getting boring, but the last several posts are great quality so I guess I will add you back to my daily bloglist. You deserve it my friend 🙂

[url=http://happyfamilyonlinepharmacy.net/]canadian pharmacy no prescription[/url]

For most up-to-date news you have to pay a visit web and on world-wide-web I found this web site as a best web site for latest updates.

[url=https://ventolin.science/]where to buy albuterol canada[/url]

[url=http://ventolin.science/]combivent cost[/url]

[url=https://silagra.trade/]buy silagra online[/url]

Hello there! I know this is kinda off topic nevertheless I’d figured I’d ask. Would you be interested in exchanging links or maybe guest writing a blog article or vice-versa? My site goes over a lot of the same subjects as yours and I believe we could greatly benefit from each other. If you are interested feel free to send me an e-mail. I look forward to hearing from you! Superb blog by the way!

[url=http://silagra.science/]silagra 50 mg price[/url]

[url=http://silagra.trade/]buy silagra online uk[/url]

[url=http://gabapentin.science/]gabapentin cost canada[/url]

[url=http://zithromaxc.online/]azithromycin brand name[/url]

[url=https://promethazine.science/]generic phenergan[/url]

[url=http://motrin.science/]motrin 3 pills[/url]

[url=https://amoxicillin.run/]amoxicillin 875 mg[/url]

[url=https://amoxicillin.run/]where to buy amoxil[/url]

[url=http://baclofentm.online/]baclofen tablet[/url]

[url=http://modafiniln.com/]modafinil prescription online[/url]

[url=http://promethazine.science/]phenergan 25mg uk[/url]

[url=http://valtrexm.com/]where can i get valtrex[/url]

[url=https://happyfamilyrxstorecanada.online/]online pharmacy for sale[/url]

[url=http://netformin.com/]buy metformin from india[/url]

Oh my goodness! Awesome article dude! Thank you, However I am experiencing difficulties with your RSS. I don’t know why I am unable to subscribe to it. Is there anybody else getting the same RSS problems? Anyone who knows the solution will you kindly respond? Thanx!!

[url=https://fluoxetine.science/]fluoxetine online uk[/url]

[url=http://baclofentm.online/]baclofen otc uk[/url]

[url=https://fluoxetine.science/]prozac generic cost canada[/url]

[url=http://fluoxetine.science/]fluoxetine 20mg online[/url]

[url=http://modafiniln.com/]cheap modafinil uk[/url]

[url=http://isotretinoin.science/]generic accutane[/url]

[url=https://antabuse.science/]antabuse no prescription[/url]

[url=http://synthroid.beauty/]synthroid 125 pill[/url]

[url=https://gabapentin.science/]neurontin 100 mg[/url]

[url=https://paxil.party/]paxil price[/url]

[url=https://happyfamilyrxstorecanada.online/]onlinepharmaciescanada com[/url]

[url=https://fluoxetine.science/]fluoxetine online pharmacy[/url]

[url=http://amoxicillin.run/]augmentin 325 mg[/url]

[url=http://celecoxib.science/]how to order celebrex online[/url]

[url=https://motrin.science/]motrin 600 over the counter[/url]

[url=https://finasteride.media/]propecia india online[/url]

[url=http://synthroid.beauty/]synthroid tab 88mcg[/url]

[url=https://synthroid.beauty/]synthroid 0.5[/url]

[url=http://duloxetine.science/]cymbalta over the counter[/url]

[url=http://celecoxib.science/]celebrex script[/url]

[url=http://albendazole.science/]albendazole how to[/url]

[url=http://dexamethasoner.online/]dexamethasone 500mcg[/url]

[url=https://netformin.com/]metformin 500 mg for sale[/url]

[url=https://gabapentin.science/]4000mg gabapentin[/url]

[url=http://duloxetine.science/]cymbalta prescription coupon[/url]

[url=https://fluoxetine.science/]prozac 120 mg[/url]

[url=https://flomaxp.com/]flomax medicine cost[/url]

[url=https://ventolin.science/]cheap allbuterol[/url]

[url=http://silagra.trade/]silagra 100[/url]

[url=https://valtrexm.com/]buy valtrex usa[/url]

[url=http://diclofenac.party/]diclofenac over the counter[/url]

[url=https://diclofenac.party/]diclofenac gel 100g[/url]

[url=http://lasixmb.online/]buy furosemide 20 mg[/url]

[url=http://diclofenac.party/]voltaren 180g[/url]

[url=https://fluconazole.party/]over the counter diflucan pill[/url]

[url=https://albuterol.africa/]ventolin best price[/url]

[url=http://prednisolone.media/]prednisolone tablets 25mg price[/url]

[url=http://toradol.download/]toradol gel[/url]

[url=https://escitalopram.party/]best price for lexapro[/url]

Разрешение на строительство — это государственный документ, предоставляемый полномочными инстанциями государственного аппарата или субъектного руководства, который предоставляет начать стройку или выполнение строительных операций.

[url=https://rns-50.ru/]Разрешение на место строительства[/url] определяет юридические основы и требования к строительным операциям, включая приемлемые типы работ, дозволенные материалы и техники, а также включает строительные инструкции и комплексы безопасности. Получение разрешения на строительство является обязательным документов для строительной сферы.

[url=http://propeciatabs.skin/]25 mg propecia[/url]

[url=http://trazodone.pics/]price of 100mg trazodone[/url]

[url=https://trazodone.pics/]trazodone pill 50mg[/url]

[url=http://avaltrex.com/]can you buy valtrex over the counter in australia[/url]

[url=https://imetformin.online/]metformin online usa no prescription[/url]

You’ve made some decent points there. I looked on the internet for more info about the issue and found most individuals will go along with your views on this website.

I’m now not sure where you are getting your info, however good topic. I needs to spend a while learning more or understanding more. Thank you for wonderful information I used to be looking for this information for my mission.

[url=http://fluconazole.pics/]diflucan india[/url]

[url=http://diflucan.business/]diflucan tablets buy online[/url]

[url=http://motilium.download/]motilium over the counter australia[/url]

[url=http://furosemide.science/]furosemide 40mg price[/url]

[url=https://metforminb.com/]metformin 3115 tablets[/url]

Transitioning from EMU to JMU for my graduate studies has been an insightful journey in 25 Estimate. EMU’s foundation has seamlessly transitioned to JMU, allowing me to deepen my understanding of estimating. Collaborating with diverse peers at JMU has expanded my perspective.

THCENTURY ILLUSTRATION OF A MAN WEARING A TOURNIQUETBLEEDING INTO A BOWL Bleeding bowl for collecting the patients blood when a lanceta needle or small doubleedged scalpelwas used for the procedure BLOODLETTING Tools of the trade Ventilation holes in the lid allowed the leeches to breathe The jar held water in which The use of leeches was widespread in the th centuryso much so that the high demand almost made the species extinct. ovartoohox buy priligy 30 mg x 10 pill how long do you stay erect with cialis kagmaabade

[url=https://bactrim.pics/]buy bactrim online without prescription[/url]

[url=https://diclofenac.party/]voltaren 2 cream[/url]

[url=http://toradol.party/]toradol 50 mg[/url]

[url=http://fluconazole.pics/]diflucan pill costs[/url]

[url=https://avaltrex.com/]valtrex over the counter canada[/url]

[url=http://diclofenac.party/]where to buy diclofenac gel[/url]

[url=http://propeciatabs.skin/]cheap propecia online canada[/url]

[url=https://trazodone.pics/]trazodone capsules[/url]

Hello there, just was alert to your blog thru Google, and located that it is truly informative. I?m gonna be careful for brussels. I?ll be grateful when you proceed this in future. Lots of people will probably be benefited out of your writing. Cheers!

Pleural mesothelioma, and that is the most common style and influences the area about the lungs, might result in shortness of breath, torso pains, along with a persistent cough, which may cause coughing up blood.

It’s difficult to find educated people on this topic, but you sound like you know what you’re talking about! Thanks

Nice blog here! Also your site loads up fast! What host are you using? Can I get your affiliate link to your host? I wish my site loaded up as fast as yours lol

[url=https://valtrex.monster/]valtrex for sale[/url]

[url=https://zoloft.monster/]200 mg zoloft price[/url]

[url=https://onlinepharmacy.monster/]compare pharmacy prices[/url]

[url=https://drugstore.monster/]online pet pharmacy[/url]

[url=https://valtrex.monster/]valtrex generic canada[/url]

[url=https://wellbutrin.monster/]wellbutrin from india[/url]

[url=https://drugstore.monster/]no prescription needed canadian pharmacy[/url]

[url=http://wellbutrin.monster/]zyban advantage pack[/url]

[url=https://zoloft.monster/]zoloft cost canada[/url]

[url=http://wellbutrin.monster/]600 mg wellbutrin[/url]

Asking questions are really good thing if you are not understanding anything entirely, except this post offers pleasant understanding even.

Быстровозводимые строения – это прогрессивные сооружения, которые различаются громадной скоростью возведения и гибкостью. Они представляют собой строения, образующиеся из предварительно созданных составляющих либо модулей, которые способны быть быстрыми темпами собраны на участке стройки.

[url=https://bystrovozvodimye-zdanija.ru/]Строительство помещений из сэндвич панелей[/url] располагают гибкостью а также адаптируемостью, что разрешает просто преобразовывать а также адаптировать их в соответствии с нуждами покупателя. Это экономически лучшее и экологически устойчивое решение, которое в крайние годы приняло маштабное распространение.

[url=http://finpecia.today/]propecia 1mg india[/url]

[url=https://wellbutrin.monster/]wellbutrin prices[/url]

Hey! I know this is kind of off topic but I was wondering if you knew where I could get a captcha plugin for my comment form? I’m using the same blog platform as yours and I’m having difficulty finding one? Thanks a lot!

[url=http://valtrex.monster/]how much is generic valtrex[/url]

[url=https://valtrex.monster/]buy valtrex online prescription[/url]

[url=https://wellbutrin.monster/]zyban 150 mg price[/url]

[url=http://finasteride.monster/]propecia price canada[/url]

[url=http://prednisolone.monster/]prednisolone 5 mg tablet without a prescription[/url]

[url=https://neurontin.monster/]neurontin 100mg tablets[/url]

[url=http://ivermectin.trade/]cheap stromectol[/url]

[url=https://ivermectin.party/]topical ivermectin cost[/url]

[url=https://finasteride.monster/]finasteride no prescription[/url]

I do agree with all the concepts you have presented on your post. They are very convincing and will definitely work. Still, the posts are too quick for newbies. May just you please extend them a bit from next time? Thank you for the post.

My brother suggested I might like this blog. He was totally right. This post actually made my day. You cann’t imagine just how much time I had spent for this information! Thanks!

[url=http://wellbutrin.monster/]where can i buy wellbutrin without prescription[/url]

It’s impressive that you are getting ideas from this piece of writing as well as from our argument made here.

[url=http://zoloft.monster/]zoloft 50 mg online[/url]

[url=http://zoloft.monster/]zoloft 125 mg[/url]

[url=http://ivermectin.trade/]buy ivermectin canada[/url]

[url=http://ivermectin.party/]can you buy stromectol over the counter[/url]

[url=https://neurontin.monster/]order neurontin online[/url]

We stumbled over here different web page and thought I might as well check things out. I like what I see so now i am following you. Look forward to looking over your web page yet again.

[url=http://propecia.monster/]purchase finasteride without a prescription[/url]

[url=http://neurontin.monster/]buy gabapentin 400 mg[/url]

[url=http://ivermectin.party/]ivermectin 4 mg[/url]

[url=http://ivermectin.trade/]stromectol coronavirus[/url]

[url=https://ivermectin.party/]ivermectin 3mg[/url]

[url=https://valtrex.monster/]valtrex generic prescription[/url]

[url=https://zoloft.monster/]average cost of generic zoloft[/url]

[url=https://finasteride.monster/]5mg finasteride[/url]

[url=https://drugstore.monster/]best online pet pharmacy[/url]

[url=https://ivermectin.party/]ivermectin 1 topical cream[/url]

[url=https://ivermectin.party/]ivermectin tablets order[/url]

Pretty section of content. I just stumbled upon your web site and in accession capital to assert that I acquire in fact enjoyed account your blog posts. Any way I’ll be subscribing to your augment and even I achievement you access consistently fast.

[url=http://propecia.monster/]how much is propecia in singapore[/url]

[url=http://drugstorepp.com/lisinopril.html]lisinopril 10 12.5 mg[/url]

Thank you for some other informative blog. Where else may just I am getting that kind of info written in such a perfect method? I have a challenge that I am simply now operating on, and I have been at the glance out for such information.

[url=https://sildenafil.live/kamagra.html]kamagra tablets uk[/url]

[url=http://pharmgf.com/]buy online pharmacy uk[/url]

[url=https://antibioticsop.com/terramycin.html]terramycin price[/url]

Wow, superb blog format! How long have you been blogging for? you make blogging glance easy. The full glance of your web site is wonderful, let alonewell as the content!

I go to see everyday some websites and information sites to read posts, but this webpage offers quality based content.

An impressive share! I have just forwarded this onto a coworker who had been doing a little research on this. And he in fact bought me lunch simply because I discovered it for him… lol. So let me reword this…. Thank YOU for the meal!! But yeah, thanx for spending time to discuss this matter here on your internet site.

[url=http://drugstorepp.com/synthroid.html]buy synthroid 150 mcg online[/url]

[url=https://sildenafil.live/silvitra.html]silvitra 120 mg pills[/url]

[url=https://pharmgf.com/zithromax.html]zithromax price singapore[/url]

[url=https://sildenafil.live/aurogra.html]aurogra 100 uk[/url]

Аренда инструмента предоставляет множество преимуществ, особенно для тех, кто редко использует специализированные инструменты или не хочет тратить деньги на их покупку. Вот несколько преимуществ аренды инструмента

аренда инструмента [url=prokat-59.ru]прокат инструмента в Перми[/url].

Компании, занимающиеся прокатом инструментов, обычно обновляют свой ассортимент и предлагают новейшие модели инструментов. Это позволяет арендаторам использовать самые современные инструменты, что может значительно повысить эффективность работы.

прокат инструмента в Перми [url=https://www.prokat-59.ru/]аренда инструмента в Перми[/url].

[url=http://medicinesaf.com/]maple leaf pharmacy in canada[/url]

[url=http://pharmgf.com/doxycycline.html]cheap doxy[/url]

[url=http://onlinepharmacy.best/modafinil.html]provigil daily use[/url]

[url=http://pharmgf.com/modafinil.html]modafinil europe buy[/url]

[url=https://drugstorepp.com/accutane.html]accutane discount[/url]

[url=http://drugstorepp.com/tadalafil.html]where to buy cheap cialis online[/url]

[url=http://happyfamilystoreonline.net/]canadian pharmacy no rx needed[/url]

[url=https://onlinepharmacy.best/albuterol.html]buying albuterol in mexico[/url]

[url=http://sildenafil.live/fildena.html]buy fildena india[/url]

[url=https://pharmgf.com/]cheapest pharmacy prescription drugs[/url]

[url=http://finasteride.science/fincar.html]fincar online[/url]

[url=https://pharmgf.com/albuterol.html]where can i get albuterol[/url]

[url=https://medicinesaf.com/lioresal.html]10 mg baclofen canada[/url]

[url=http://antibioticsop.com/vantin.html]cost of vantin 200 mg[/url]

[url=http://onlinepharmacy.best/lisinopril.html]lisinopril 200 mg[/url]

[url=http://medicinesaf.com/lioresal.html]baclofen australia[/url]

[url=http://drugstorepp.com/lisinopril.html]cost of lisinopril 5 mg[/url]

[url=http://pharmgf.com/dexamethasone.html]dexamethasone brand name australia[/url]

[url=https://pharmgf.com/clomid.html]clomid over the counter australia[/url]

Ahaa, its good discussion about this piece of writing here at this website, I have read all that, so now me also commenting here.

[url=https://medicinesaf.com/]safe online pharmacy[/url]

[url=http://pharmgf.com/lyrica.html]lyrica 500 mg tablet[/url]

[url=http://pharmgf.com/valtrex.html]buy valtrex online[/url]

[url=http://pharmgf.com/]online canadian pharmacy coupon[/url]

[url=https://pharmgf.com/baclofen.html]baclofen 75 mg[/url]

[url=http://pharmgf.com/metformin.html]where to get metformin[/url]

[url=https://trazodone.party/]oder trazadone for sleeping[/url]

[url=http://zanaflex.science/]tizanidine tablets[/url]

[url=https://diclofenac.trade/]voltaren gel online india[/url]

[url=https://valtrex.africa/]buying valtrex in mexico[/url]

It’s an remarkable piece of writing designed for all the web people; they will get benefit from it I am sure.

[url=https://aurogra.science/]aurogra 100 uk[/url]

Hurrah! At last I got a webpage from where I be able to truly take helpful information regarding my study and knowledge.

[url=https://zoloft.beauty/]online zoloft[/url]

I’m really enjoying the design and layout of your blog. It’s a very easy on the eyes which makes it much more enjoyable for me to come here and visit more often. Did you hire out a designer to create your theme? Great work!

[url=http://prednisonebu.online/]prednisone in canada[/url]

[url=https://femaleviagra.party/]viagra nyc[/url]

At this moment I am going away to do my breakfast, once having my breakfast coming yet again to read more news.

[url=https://effexor.party/]effexor xr 150mg[/url]

Very nice article, just what I wanted to find.

[url=http://happyfamilystoreonline.online/]happy family store pharmacy[/url]

[url=http://lasixg.online/]generic no prescription cheap furosemoide[/url]

You really make it seem so easy with your presentation however I in finding this topic to be really something which I feel I would never understand. It sort of feels too complicated and very large for me. I am taking a look forward in your next post, I will try to get the grasp of it!

[url=https://inderal.party/]propranolol buy online australia[/url]

[url=http://sildenafiltn.online/]viagra purchase in canada[/url]

[url=http://zanaflex.science/]tizanidine 4 mg price[/url]

[url=https://sildenafil.africa/]viagra in india price[/url]

[url=https://diclofenac.trade/]voltaren gel 50g price[/url]

I love it when people come together and share views. Great blog, continue the good work!

Разрешение на строительство – это законный документ, выдающийся государственными органами власти, который предоставляет возможность законное допуск на инициацию строительства, изменение, основной реабилитационный ремонт или разнообразные сорта строительной деятельности. Этот запись необходим для осуществления практически различных строительных и ремонтных работ, и его недостаток может повести к серьезными юридическими и денежными последствиями.

Зачем же нужно [url=https://xn--73-6kchjy.xn--p1ai/]порядок выдачи разрешений на строительство[/url]?

Правовая основа и надзор. Разрешение на строительство и реконструкцию – это средство обеспечения соблюдения правил и норм в процессе строительства. Это дает гарантийное соблюдение нормативов и законов.

Подробнее на [url=https://xn--73-6kchjy.xn--p1ai/]https://rns50.ru/[/url]

В финальном исходе, разрешение на строительство и монтаж является важным механизмом, ассигновывающим соблюдение законности, обеспечение безопасности и устойчивое развитие строительной сферы. Оно более того представляет собой неотъемлемым ходом для всех, кто собирается заниматься строительством или модернизацией недвижимости, и его наличие способствует укреплению прав и интересов всех сторон, участвующих в строительстве.

[url=http://ciproflxn.online/]cipro tendon[/url]

Разрешение на строительство – это правительственный документ, предоставляемый компетентными органами власти, который обеспечивает законное разрешение на инициацию строительных операций, модификацию, капитальный ремонт или другие типы строительной деятельности. Этот уведомление необходим для осуществления практически разнообразных строительных и ремонтных мероприятий, и его отсутствие может спровоцировать важными правовыми и финансовыми последствиями.

Зачем же нужно [url=https://xn--73-6kchjy.xn--p1ai/]выдача разрешения на строительство[/url]?

Правовая основа и надзор. Разрешение на строительство и реконструкцию объекта – это механизм ассигнования соблюдения правил и норм в процессе постройки. Удостоверение обеспечивает соблюдение правил и стандартов.

Подробнее на [url=https://xn--73-6kchjy.xn--p1ai/]www.rns50.ru[/url]

В конечном счете, разрешение на строительство объекта является ключевым методом, ассигновывающим законность, безопасность и устойчивое развитие строительства. Оно также представляет собой обязательным мероприятием для всех, кто собирается осуществлять строительство или модернизацию объектов недвижимости, и его наличие помогает укреплению прав и интересов всех участников, задействованных в строительной деятельности.

These are truly impressive ideas in about blogging. You have touched some pleasant points here. Any way keep up wrinting.

[url=https://budesonide.party/]budesonide 80[/url]

[url=https://valtrex.skin/]cheapest valtrex generic[/url]

[url=http://atomoxetine.party/]strattera 25 mg coupon[/url]

[url=http://accutanes.online/]accutane online[/url]

[url=https://prednisonebu.online/]prednisone 20 mg without prescription[/url]

[url=https://aflomax.online/]noroxin pill[/url]

[url=https://ivermectin.skin/]stromectol over the counter[/url]

[url=https://trazodone.trade/]250mg trazodone[/url]

[url=http://tadalafil.skin/]how to get cialis[/url]

[url=http://valtrex.africa/]can you buy valtrex over the counter in mexico[/url]

[url=https://zestoretic.science/]zestoretic 20 price[/url]

Hmm it appears like your site ate my first comment (it was extremely long) so I guess I’ll just sum it up what I submitted and say, I’m thoroughly enjoying your blog. I as well am an aspiring blog blogger but I’m still new to the whole thing. Do you have any points for rookie blog writers? I’d definitely appreciate it.

You have mentioned very interesting details! ps nice site. “Where can I find a man governed by reason instead of habits and urges” by Kahlil Gibran.

Скорозагружаемые здания: коммерческая выгода в каждом кирпиче!

В современной сфере, где время – деньги, быстровозводимые здания стали настоящим выходом для компаний. Эти современные сооружения включают в себя солидную надежность, финансовую эффективность и молниеносную установку, что обуславливает их оптимальным решением для различных бизнес-проектов.

[url=https://bystrovozvodimye-zdanija-moskva.ru/]Быстровозводимые здания[/url]

1. Скорость строительства: Минуты – важнейший фактор в бизнесе, и здания с высокой скоростью строительства позволяют существенно сократить сроки строительства. Это высоко оценивается в моменты, когда срочно требуется начать бизнес и начать зарабатывать.

2. Финансовая выгода: За счет оптимизации процессов производства элементов и сборки на месте, экономические затраты на моментальные строения часто бывает ниже, по сравнению с обычными строительными задачами. Это позволяет сократить затраты и обеспечить более высокий доход с инвестиций.

Подробнее на [url=https://xn--73-6kchjy.xn--p1ai/]https://www.scholding.ru/[/url]

В заключение, сооружения быстрого монтажа – это идеальное решение для бизнес-мероприятий. Они включают в себя эффективное строительство, финансовую выгоду и надежность, что придает им способность превосходным выбором для компаний, активно нацеленных на скорый старт бизнеса и гарантировать прибыль. Не упустите момент экономии времени и средств, наилучшие объекты быстрого возвода для ваших будущих проектов!

Экспресс-строения здания: финансовая польза в каждой детали!

В современной действительности, где время имеет значение, здания с высокой скоростью строительства стали реальным спасением для фирм. Эти новейшие строения сочетают в себе высокую надежность, экономичное использование ресурсов и быстроту установки, что придает им способность наилучшим вариантом для разнообразных предпринимательских инициатив.

[url=https://bystrovozvodimye-zdanija-moskva.ru/]Каркас быстровозводимого здания[/url]

1. Молниеносное строительство: Время – это самый важный ресурс в финансовой сфере, и экспресс-сооружения обеспечивают существенное уменьшение сроков стройки. Это преимущественно важно в моменты, когда актуально оперативно начать предпринимательство и начать получать прибыль.

2. Экономия средств: За счет улучшения производственных процедур элементов и сборки на объекте, финансовые издержки на быстровозводимые объекты часто снижается, по сопоставлению с обыденными строительными проектами. Это предоставляет шанс сократить издержки и получить более высокую рентабельность инвестиций.

Подробнее на [url=https://xn--73-6kchjy.xn--p1ai/]https://scholding.ru[/url]

В заключение, экспресс-конструкции – это превосходное решение для предпринимательских задач. Они сочетают в себе быстрое строительство, экономию средств и надежные характеристики, что обуславливает их первоклассным вариантом для предпринимателей, готовых к мгновенному началу бизнеса и получать деньги. Не упустите шанс на сокращение времени и издержек, лучшие скоростроительные строения для вашего предстоящего предприятия!

[url=https://advairp.online/]advair 2018 coupon[/url]

I really like it when people come together and share thoughts. Great website, keep it up!

[url=http://onlinepharmacy.party/]canadien pharmacies[/url]

Это лучшее онлайн-казино, где вы можете насладиться широким выбором игр и получить максимум удовольствия от игрового процесса.

[url=http://sertraline.science/]25g zoloft[/url]

[url=http://budesonide.download/]budesonide 3 mg price[/url]

Добро пожаловать на сайт онлайн казино, мы предлагаем уникальный опыт для любителей азартных игр.

Онлайн казино радует своих посетителей более чем двумя тысячами увлекательных игр от ведущих разработчиков.

[url=http://modafinil.party/]buy modafinil 100mg online[/url]

[url=http://trental.party/]trental 400 mg online india[/url]

В нашем онлайн казино вы найдете широкий спектр слотов и лайв игр, присоединяйтесь.

[url=https://orlistat.science/]orlistat 60 mg[/url]

Howdy would you mind letting me know which hosting company you’re utilizing? I’ve loaded your blog in 3 completely different web browsers and I must say this blog loads a lot quicker then most. Can you suggest a good internet hosting provider at a honest price? Thank you, I appreciate it!

[url=https://ivermectin.skin/]order stromectol[/url]

[url=https://lasixfd.online/]order furosemide online[/url]

[url=https://trazodone2023.online/]trazodone online canada[/url]

[url=https://doxycycline.africa/]doxycycline 150 mg cost[/url]

[url=https://happyfamilypharmacycanada.org/]canadian online pharmacy no prescription[/url]

[url=http://diflucan.media/]diflucan 150 mg online[/url]

[url=https://happyfamilyrx.org/]online pharmacy denmark[/url]

[url=https://modafinil.beauty/]buy modafinil paypal[/url]

Wonderful site. A lot of useful information here. I’m sending it to some pals ans also sharing in delicious. And naturally, thank you in your effort!

Скоростроительные здания: коммерческий результат в каждом строительном блоке!

В современном мире, где время имеет значение, объекты быстрого возвода стали реальным спасением для предпринимательства. Эти инновационные конструкции комбинируют в себе повышенную прочность, экономическую эффективность и мгновенную сборку, что придает им способность первоклассным вариантом для различных коммерческих проектов.

[url=https://bystrovozvodimye-zdanija-moskva.ru/]Строительство быстровозводимых зданий под ключ цена[/url]

1. Быстрое возведение: Моменты – наиважнейший аспект в коммерческой деятельности, и сооружения моментального монтажа позволяют существенно сократить сроки строительства. Это особенно ценно в ситуациях, когда срочно нужно начать бизнес и начать прибыльное ведение бизнеса.

2. Экономия средств: За счет совершенствования производственных операций по изготовлению элементов и монтажу на площадке, бюджет на сооружения быстрого монтажа часто бывает менее, по сопоставлению с традиционными строительными задачами. Это способствует сбережению денежных ресурсов и достичь большей доходности инвестиций.

Подробнее на [url=https://xn--73-6kchjy.xn--p1ai/]https://scholding.ru/[/url]

В заключение, объекты быстрого возвода – это великолепное решение для коммерческих инициатив. Они сочетают в себе быстроту монтажа, эффективное использование ресурсов и твердость, что придает им способность оптимальным решением для предпринимательских начинаний, готовых начать прибыльное дело и извлекать прибыль. Не упустите возможность сократить затраты и время, прекрасно себя показавшие быстровозводимые сооружения для вашего следующего проекта!

[url=http://doxycyclinev.online/]cheap doxycycline tablets[/url]

[url=http://hydroxychloroquine.trade/]hydroxychloroquine sulfate 200mg[/url]

Быстромонтажные здания: прибыль для бизнеса в каждой составляющей!

В сегодняшнем обществе, где время равно деньгам, скоростройки стали истинным спасением для коммерческой деятельности. Эти современные конструкции обладают солидную надежность, экономичное использование ресурсов и скорость монтажа, что придает им способность лучшим выбором для бизнес-проектов разных масштабов.

[url=https://bystrovozvodimye-zdanija-moskva.ru/]Легковозводимые здания из металлоконструкций цена[/url]

1. Молниеносное строительство: Моменты – наиважнейший аспект в коммерческой деятельности, и скоро возводимые строения позволяют существенно сократить сроки строительства. Это особенно выгодно в условиях, когда актуально оперативно начать предпринимательство и начать извлекать прибыль.

2. Бюджетность: За счет совершенствования производственных операций по изготовлению элементов и монтажу на площадке, расходы на скоростройки часто остается меньше, по отношению к традиционным строительным проектам. Это позволяет сэкономить средства и достичь более высокой инвестиционной доходности.

Подробнее на [url=https://xn--73-6kchjy.xn--p1ai/]https://scholding.ru/[/url]

В заключение, скоростроительные сооружения – это оптимальное решение для бизнес-проектов. Они обладают ускоренную установку, экономичность и устойчивость, что сделало их идеальным выбором для деловых лиц, стремящихся оперативно начать предпринимательскую деятельность и получать прибыль. Не упустите шанс на сокращение времени и издержек, прекрасно себя показавшие быстровозводимые сооружения для ваших будущих инициатив!

I simply could not depart your web site prior to suggesting that I extremely enjoyed the standard information a person supply in your visitors? Is going to be back incessantly in order to inspect new posts

[url=http://synthroid.science/]buy generic synthroid[/url]

[url=https://atretinoin.com/]uk tretinoin[/url]

[url=https://synthroid.africa/]synthroid 137 price[/url]

[url=https://retinoa.science/]retino 0.025[/url]

[url=http://happyfamilypharmacycanada.org/]canadian pharmacy ltd[/url]

[url=http://zithromaxabio.online/]azithromycin 25 mg[/url]

[url=https://ilyrica.online/]medication lyrica 50 mg[/url]

[url=https://citalopram.download/]citalopram tab 20mg[/url]

[url=https://effexor.science/]375 effexor[/url]

[url=http://lisinoprill.online/]lisinopril 5mg tablets[/url]

Pretty great post. I simply stumbled upon your blog and wanted to mention that I have really enjoyed browsing your blog posts. In any case I’ll be subscribing on your feed and I am hoping you write again soon!

[url=https://atretinoin.com/]retin a india online[/url]

[url=http://ifinasteride.online/]where to get propecia in canada[/url]

[url=https://advairmds.online/]advair hfa[/url]

[url=http://roaccutane.online/]order accutane online uk[/url]

[url=http://doxycicline.online/]doxycycline over the counter nz[/url]

[url=https://ciprofx.online/]cipro 1000 mg[/url]

[url=http://modafiniln.online/]where to buy modafinil online[/url]

[url=https://metforminglk.online/]metformin tablets where to buy[/url]

[url=http://doxycicline.online/]where can i get doxycycline pills[/url]

Высокая надежность: Релейные стабилизаторы обычно имеют простую конструкцию, что делает их надежными и долговечными.

электрический стабилизатор [url=http://www.stabilizatory-napryazheniya-1.ru/]http://www.stabilizatory-napryazheniya-1.ru/[/url].

Постоянные перепады напряжения и искажения могут привести к ускоренному старению электрических компонентов и оборудования. Это может сократить срок службы оборудования и требовать более частой замены его частей или даже полной замены.

стабилизатор напряжения ресанта 10000вт цена [url=https://www.stabilizatory-napryazheniya-1.ru]https://www.stabilizatory-napryazheniya-1.ru[/url].

[url=https://happyfamilypharmacycanada.org/]legit online pharmacy[/url]

[url=https://synthroid.science/]synthroid brand coupon[/url]

[url=https://clonidine.party/]where to buy clonidine[/url]

[url=https://azitromycin.online/]can i buy azithromycin over the counter in us[/url]

Выбирайте прочный стабилизатор напряжения для истинного спокойствия

стабилизаторы напряжения http://stabrov.ru.

[url=https://happyfamilyrx.org/]happy family rx[/url]

Прогон сайта с использованием программы “Хрумер” – это способ автоматизированного продвижения ресурса в поисковых системах. Этот софт позволяет оптимизировать сайт с точки зрения SEO, повышая его видимость и рейтинг в выдаче поисковых систем.

Хрумер способен выполнять множество задач, таких как автоматическое размещение комментариев, создание форумных постов, а также генерацию большого количества обратных ссылок. Эти методы могут привести к быстрому увеличению посещаемости сайта, однако их надо использовать осторожно, так как неправильное применение может привести к санкциям со стороны поисковых систем.

[url=https://kwork.ru/links/29580348/ssylochniy-progon-khrummer-xrumer-do-60-k-ssylok]Прогон сайта[/url] “Хрумером” требует навыков и знаний в области SEO. Важно помнить, что качество контента и органичность ссылок играют важную роль в ранжировании. Применение Хрумера должно быть частью комплексной стратегии продвижения, а не единственным методом.

Важно также следить за изменениями в алгоритмах поисковых систем, чтобы адаптировать свою стратегию к новым требованиям. В итоге, прогон сайта “Хрумером” может быть полезным инструментом для SEO, но его использование должно быть осмотрительным и в соответствии с лучшими практиками.

[url=https://zanaflex.trade/]zanaflex 4 mg capsule[/url]

[url=https://happyfamilypharmacycanada.org/]pharmacy discount card[/url]

[url=https://vermoxmd.online/]vermox australia[/url]

[url=http://citalopram.download/]citalopram 5 mg uk[/url]

[url=http://happyfamilystorepharmacy.net/]discount pharmacy online[/url]

[url=http://modafinilhr.online/]how to buy modafinil in usa[/url]

[url=https://avodart.party/]avodart 05mg[/url]

[url=https://tizanidine.download/]tizanidine over the counter[/url]

[url=http://dexamethasonen.online/]dexamethasone 0.1[/url]

[url=https://lasixni.online/]buy lasix online cheap[/url]

[url=http://retinoa.science/]retino cream price[/url]

[url=http://happyfamilystorepharmacy.net/]online pharmacy without insurance[/url]

[url=https://inderal.science/]inderal 60 mg drug[/url]

Hello! I’ve been following your site for a while now and finally got the bravery to go ahead and give you a shout out from Huffman Tx! Just wanted to tell you keep up the great job!

[url=https://lisinoprill.online/]lisinopril price comparison[/url]

[url=http://tretinoincream.skin/]tretinoin prescription[/url]

[url=http://domperidone.science/]motilium over the counter uk[/url]

[url=https://lisinoprill.online/]zestoretic online[/url]

[url=https://roaccutane.online/]where can i get accutane[/url]

[url=http://diflucanbsn.online/]can you buy diflucan over the counter in canada[/url]

hi!,I love your writing so so much! proportion we communicate more approximately your post on AOL? I need an expert in this space to unravel my problem. May be that is you! Looking forward to see you.

[url=http://familystorerx.org/]canada pharmacy online[/url]

[url=https://ciprofx.online/]buy ciprofloxacin 500mg online[/url]

[url=http://nolvadex.science/]nolvadex 20mg india[/url]

[url=https://tetracycline.party/]terramycin for dogs[/url]

[url=http://erythromycin.directory/]erythromycin cream buy online[/url]

[url=https://retina.business/]retin a 25 cream[/url]

[url=http://nolvadex.party/]40 mg tamoxifen[/url]

[url=http://acyclovirus.com/]acyclovir pills uk[/url]

[url=http://diflucanb.online/]diflucan singapore[/url]

[url=https://synthroid.media/]synthroid 137.5 mcg[/url]

[url=http://promethazine.party/]phenergan generic cost[/url]

[url=http://ciproe.online/]ciprofloxacin canadian pharmacy[/url]

[url=https://suhagra.science/]suhagra 100 from india[/url]

[url=https://lasixd.online/]cost of furosemide uk[/url]

[url=https://elimite.party/]generic elimite cream price[/url]

[url=http://modafinilr.online/]buy modafinil uk paypal[/url]

Ищете надежного подрядчика для устройства стяжки пола в Москве? Обращайтесь к нам на сайт styazhka-pola24.ru! Мы предлагаем услуги по залитию стяжки пола любой сложности и площади, а также гарантируем быстрое и качественное выполнение работ.

[url=https://clomidsale.online/]cheap clomid tablets uk[/url]

[url=http://felomax.online/]flomax 21339[/url]

[url=https://retinacream.skin/]cheap tretinoin cream[/url]

[url=http://avodart.directory/]avodart 5[/url]

[url=http://clomidsale.online/]where can i get clomid uk[/url]

строительство снабжение

[url=https://motilium.science/]motilium over the counter[/url]

[url=https://chloroquine.science/]chloroquine brand[/url]

[url=https://acyclovirus.com/]order acyclovir online no prescription[/url]

[url=https://accutaneo.online/]accutane prescription australia[/url]

[url=https://diflucanb.online/]diflucan otc usa[/url]

[url=https://vardenafil.science/]buy vardenafil 10mg[/url]

Наши цехи предлагают вам шанс воплотить в жизнь ваши самые авантюрные и изобретательные идеи в сфере внутреннего дизайна. Мы практикуем на изготовлении занавесей со складками под по заказу, которые не только делают вашему дому неповторимый стиль, но и выделяют вашу особенность.

Наши [url=https://tulpan-pmr.ru]тканевые шторы плиссе[/url] – это симфония роскоши и практичности. Они создают поилку, фильтруют сияние и консервируют вашу приватность. Выберите материал, оттенок и декор, и мы с с удовольствием произведем текстильные шторы, которые точно подчеркнут специфику вашего декорирования.

Не стесняйтесь типовыми решениями. Вместе с нами, вы сможете сформировать текстильные занавеси, которые будут соответствовать с вашим неповторимым вкусом. Доверьтесь нам, и ваш резиденция станет местом, где каждый компонент говорит о вашу особенность.

Подробнее на [url=https://tulpan-pmr.ru]портале[/url].

Закажите гардины со складками у нас, и ваш дом переменится в рай стиля и комфорта. Обращайтесь к нам, и мы содействуем вам воплотить в жизнь личные грезы о превосходном внутреннем дизайне.

Создайте свою собственную сказку дизайна с нашей командой. Откройте мир перспектив с портьерами плиссе под по заказу!

[url=http://amoxil.directory/]where can you buy amoxicillin[/url]

Наши производства предлагают вам перспективу воплотить в жизнь ваши самые рискованные и оригинальные идеи в секторе домашнего дизайна. Мы занимаемся на изготовлении занавесей со складками под по вашему заказу, которые не только подчеркивают вашему жилью неповторимый стиль, но и подсвечивают вашу уникальность.

Наши [url=https://tulpan-pmr.ru]купить плиссе от производителя[/url] – это гармония изысканности и функциональности. Они создают атмосферу, фильтруют люминесценцию и поддерживают вашу конфиденциальность. Выберите материал, тон и отделка, и мы с с радостью произведем гардины, которые именно подчеркнут особенность вашего внутреннего дизайна.

Не сдерживайтесь типовыми решениями. Вместе с нами, вы будете способны разработать гардины, которые будут соответствовать с вашим собственным вкусом. Доверьтесь нашей бригаде, и ваш дворец станет помещением, где всякий компонент проявляет вашу уникальность.

Подробнее на [url=https://tulpan-pmr.ru]портале sun-interio1.ru[/url].

Закажите текстильные занавеси со складками у нас, и ваш съемное жилье преобразится в сад стиля и комфорта. Обращайтесь к нашей команде, и мы предоставим помощь вам воплотить в жизнь ваши собственные грезы о идеальном оформлении.

Создайте свою собственную индивидуальную рассказ интерьера с нашей командой. Откройте мир перспектив с текстильными шторами плиссе под индивидуальный заказ!

Хотите получить идеально ровные стены в своей квартире или офисе? Обращайтесь к профессионалам на сайте mehanizirovannaya-shtukaturka-moscow.ru! Мы предоставляем услуги по механизированной штукатурке стен в Москве и области, а также гарантируем быстрое и качественное выполнение работ.

[url=https://lyricagmb.online/]price of lyrica 75 mg in india[/url]

[url=https://dipyridamole.science/]buy dipyridamole[/url]

[url=https://finpecia.skin/]finasteride pills for sale[/url]

[url=https://retina.business/]tretinoin india[/url]

[url=http://colchicine.online/]colchicine 6 mg tablet[/url]

[url=http://motilium.science/]motilium for breastfeeding[/url]

[url=http://chloroquine.science/]chloroquine 250 mg tablets[/url]

[url=http://aaccutane.online/]where to get accutane[/url]

[url=http://baclanofen.com/]40 mg baclofen[/url]

[url=http://prednison.online/]brand prednisone[/url]

[url=http://avodart.directory/]avodart medication generic[/url]

[url=https://retina.business/]how can i get retin a[/url]

[url=https://abamoxicillin.online/]generic for augmentin[/url]

[url=http://azithromycinq.com/]azithromycin capsules[/url]

[url=https://lasixd.online/]lasix 20 mg price[/url]

[url=http://vardenafil.science/]vardenafil tablets 20 mg[/url]

[url=http://ciprok.online/]buy ciprofloxacin 500mg online uk[/url]

Oh my goodness! Incredible article dude! Thank you, However I am experiencing difficulties with your RSS. I don’t know why I can’t subscribe to it. Is there anybody else getting the same RSS problems? Anybody who knows the solution will you kindly respond? Thanx!!

[url=https://metforminb.online/]metformin singapore[/url]

[url=https://zithromax.party/]buy cheap zithromax[/url]

[url=http://synthroid.media/]synthroid 1 mg[/url]

[url=https://lisinoprilc.online/]lisinopril 20 mg tab price[/url]

Asking questions are really good thing if you are not understanding anything fully, but this piece of writing provides nice understanding even.

[url=http://nolvadex.party/]nolvadex d[/url]

[url=http://prednison.online/]buy deltasone no prescription[/url]

[url=http://baclanofen.com/]baclofen rx[/url]

[url=https://prednisolone.party/]buy prednisolone online[/url]

[url=https://silagra.pics/]buy silagra india[/url]

[url=https://aaccutane.online/]buy accutane online[/url]

[url=https://cafergot.science/]cafergot for sale[/url]

[url=http://promethazine.party/]phenergan lowest cost[/url]

[url=https://lasixb.online/]furosemide 20 mg pill[/url]

[url=https://lasixb.online/]lasix tabs[/url]

[url=https://hydrochlorothiazide.science/]hydrochlorothiazide purchase online[/url]

[url=https://baclanofen.com/]lioresal buy online[/url]

Good post. I’m going through a few of these issues as well..

[url=https://azithromycin.party/]buy zithromax online fast shipping[/url]

[url=http://levitra.best/]cost levitra 20mg[/url]

Its like you read my mind! You seem to understand so much approximately this, like you wrote the guide in it or something. I feel that you could do with some p.c. to drive the message house a bit, however other than that, this is great blog. A great read. I’ll definitely be back.

It’s very straightforward to find out any topic on net as compared to books, as I found this article at this website.

[url=https://synthroid.media/]synthroid mexico[/url]

[url=http://doxycicline.com/]doxycycline price 100mg[/url]

Обеспечьте своему жилищу идеальные стены с механизированной штукатуркой. Выберите надежное решение на mehanizirovannaya-shtukaturka-moscow.ru.

[url=http://synthroidb.online/]synthroid 200 mcg tablet[/url]

[url=https://glucophage.company/]order metformin online uk[/url]

[url=http://amoxil.directory/]cheap amoxil online[/url]

[url=https://lyricadp.online/]lyrica capsules 75 mg cost[/url]

[url=http://amoxicillinf.online/]amoxicillin 500mg capsules uk[/url]

[url=https://azithromycinq.online/]buy azithromycin without prescription[/url]

[url=https://finasteridepb.online/]propecia generic price[/url]

[url=http://azithromycini.online/]zithromax cost canada[/url]

[url=http://diflucanld.online/]diflucan 50 mg tablet[/url]

[url=http://prednisome.online/]can you buy prednisone[/url]

[url=https://ovaltrex.com/]buy valtrex[/url]

[url=http://albuterolhl.online/]combivent buy online[/url]

[url=http://synthroidt.online/]synthroid 120 mcg[/url]

Стабилизаторы напряжения Rеsanta обеспечивают защиту от перенапряжения, что позволяет предотвратить повреждение электронных устройств, вызванное скачками напряжения.

стабилизатор напряжения 220в для дома ресанта 10квт [url=https://www.stabilizatory-napryazheniya-1.ru/]https://www.stabilizatory-napryazheniya-1.ru/[/url].

[url=https://predonisone.online/]prednisone 10 mg tablet[/url]

[url=http://accutanelb.online/]accutane 10mg price[/url]

[url=https://prednisonee.online/]deltasone costs[/url]

[url=https://amoxicillinf.online/]augmentin 375mg[/url]

[url=https://accutanelb.online/]cost of accutane[/url]

[url=https://ovaltrex.online/]can i buy valtrex without prescription[/url]

[url=http://azithromycini.online/]where can i buy zithromax uk[/url]

[url=https://synthroidt.online/]synthroid 112 mcg tablet[/url]

[url=https://ovaltrex.com/]valtrex without a prescription[/url]

[url=http://happyfamilyrxstorecanada.com/]family care rx pharmacy[/url]

Howdy! This is kind of off topic but I need some advice from an established blog. Is it hard to set up your own blog? I’m not very techincal but I can figure things out pretty fast. I’m thinking about creating my own but I’m not sure where to start. Do you have any tips or suggestions? Thanks

[url=http://azithromycini.online/]azithromycin z-pack[/url]

[url=https://accutanelb.online/]buy accutane online 30mg[/url]

Медленное время отклика: Релейные стабилизаторы обычно имеют более длительное время отклика на изменения напряжения, поэтому они не всегда подходят для устройств, требующих мгновенной стабилизации.

купить однофазный стабилизатор напряжения 10 квт [url=https://www.stabilizatory-napryazheniya-1.ru]https://www.stabilizatory-napryazheniya-1.ru[/url].

[url=https://prednisome.online/]buy prednisone online no prescription[/url]

[url=http://diflucanld.online/]can you purchase diflucan over the counter[/url]

[url=https://diflucanld.online/]where can i get diflucan[/url]

[url=http://valtarex.com/]by valtrex online[/url]

Hey! I realize this is kind of off-topic but I had to ask. Does operating a well-established blog like yours take a lot of work? I’m completely new to operating a blog but I do write in my diary daily. I’d like to start a blog so I can share my experience and views online. Please let me know if you have any ideas or tips for new aspiring bloggers. Appreciate it!

[url=http://suhagraxs.online/]suhagra tablet price[/url]

[url=https://acqutane.online/]accutane 10 mg discount[/url]

[url=https://metforminex.online/]metformin 1000mg without prescription[/url]

[url=https://metforminex.online/]buy metformin 850 mg[/url]

[url=https://suhagraxs.online/]suhagra 100 buy[/url]

[url=https://elimite.skin/]order elimite online[/url]

[url=https://metforminzb.online/]order metformin 500 mg online[/url]

[url=http://acqutane.online/]order accutane online canada[/url]

[url=https://permethrin.skin/]where to buy elimite cream over the counter[/url]

[url=https://azithromycini.online/]buy zithromax online usa[/url]

[url=http://baclofendl.online/]10mg baclofen tablet price[/url]

[url=http://elimite.skin/]elimite price[/url]

[url=http://retinagel.skin/]tretinoin 025 cream coupon[/url]

[url=http://aprednisone.online/]canadian pharmacy 5 mg prednisone no rx[/url]

[url=http://baclofendl.online/]baclofen tabs 10mg[/url]

[url=https://ilasix.online/]best over the counter lasix[/url]

[url=https://ibaclofeno.online/]10mg baclofen tablet[/url]

[url=https://azithromycinq.online/]azithromycin cheap online[/url]

[url=https://valtrexbc.online/]buy valrex online[/url]

[url=https://iprednisone.online/]prednisone for sale online[/url]

[url=http://doxycyclinedsp.online/]doxycycline buy usa[/url]

[url=http://lisinoprilrm.online/]lisinopril 10 mg for sale[/url]

I all the time used to read post in news papers but now as I am a user of web thus from now I am using net for posts, thanks to web.

[url=http://finasteridel.online/]propecia india buy[/url]

[url=https://accutanelb.online/]can you buy accutane over the counter in canada[/url]

[url=http://lisinoprilhc.online/]zestril 25 mg[/url]

Hi there Dear, are you in fact visiting this site daily, if so then you will absolutely get good experience.

[url=http://diflucanv.com/]buy diflucan medicarions[/url]

[url=https://lyricatb.online/]900mg lyrica[/url]

[url=https://prednisonee.online/]prednisone for sale no prescription[/url]

[url=https://aprednisone.online/]prednisone buy[/url]

[url=http://alisinopril.online/]zestril canada[/url]

[url=http://esynthroid.online/]synthroid 100 pill[/url]

[url=http://valtrexbc.online/]how can i get valtrex[/url]

[url=https://alisinopril.online/]2 lisinopril[/url]

[url=https://albuterolhi.online/]where to buy albuterol australia[/url]

[url=http://alisinopril.online/]lisinopril mexico[/url]

[url=http://dexamethasonelt.online/]where can i buy dexamethasone[/url]

Если вы собираетесь купить новый инструмент, прокат может стать отличной возможностью его протестировать перед покупкой. Вы сможете оценить его качество, функциональность и соответствие ваших требований. Если инструмент устроит вас, вы всегда можете купить его после аренды.

магазин проката [url=https://prokat888.ru]https://prokat888.ru[/url].

[url=http://clomip.com/]clomid generic south africa[/url]

[url=https://accutanelb.online/]accutane prescription uk[/url]

[url=http://albuterolhi.online/]buy ventolin no prescription[/url]

[url=http://valtrexm.online/]valtrex cost[/url]

[url=http://diflucanld.online/]diflucan pill costs[/url]

[url=https://predonisone.online/]where to buy prednisone in australia[/url]

[url=http://prednisonee.online/]prednisolone prednisone[/url]

[url=https://happyfamilyrxstorecanada.com/]canadian pharmacy 24[/url]

[url=https://synthroidcr.online/]synthroid 0.2 mg[/url]

[url=http://ilasix.online/]fureosinomide[/url]

[url=http://doxycyclinedsp.online/]cost of doxycycline[/url]

[url=http://acqutane.online/]how to get accutane australia[/url]

[url=http://diflucanld.online/]diflucan 150 mg caps[/url]

[url=https://clomip.com/]clomid 100 mg tablet[/url]

[url=https://synthroidt.online/]synthroid tablets for sale[/url]

[url=http://accutanelb.online/]generic accutane cost[/url]

[url=https://baclofendl.online/]baclofen 25 mg india[/url]

[url=https://metforminex.online/]glucophage 850 mg price[/url]

[url=https://synthroidt.online/]online pharmacy no script synthroid 75[/url]

[url=http://metforminzb.online/]discount glucophage[/url]

[url=https://metforminex.online/]metformin 1500[/url]

[url=http://prednisonee.online/]prednisone 10 mg daily[/url]

[url=http://diflucanv.online/]diflucan pill canada[/url]

[url=https://lisinoprilhc.online/]lisinopril 2.5 mg[/url]

[url=https://baclofendl.online/]baclofen 10 mg price in india[/url]

[url=https://prednisonefn.online/]prednisone 5mg price[/url]

[url=http://baclofendl.online/]baclofen prescription uk[/url]

Very nice article, exactly what I needed.

My brother suggested I might like this website. He used to be totally right. This publish actually made my day. You cann’t believe just how much time I had spent for this information! Thank you!

[url=http://finasteridel.online/]finasteride cream[/url]

[url=http://finasteridel.online/]finasteride how to get[/url]

[url=https://predonisone.online/]prednisone 10mg tabs[/url]

[url=http://permethrin.skin/]elimite cream otc[/url]

Hey there, I think your blog might be having browser compatibility issues. When I look at your blog in Ie, it looks fine but when opening in Internet Explorer, it has some overlapping. I just wanted to give you a quick heads up! Other then that, fantastic blog!

[url=https://albuterolhl.online/]albuterol capsules[/url]

[url=http://diflucanv.com/]where can i buy diflucan 1[/url]

[url=http://diflucanld.online/]diflucan 100mg[/url]

[url=http://metforminex.online/]glucophage no prescription[/url]

[url=https://diflucanld.online/]how to buy diflucan online[/url]

[url=https://accutanelb.online/]accutane best price[/url]

This article provides clear idea for the new users of blogging, that truly how to do blogging and site-building.

[url=https://albuterolhl.online/]cheap combivent[/url]

[url=http://iprednisone.online/]average cost of prednisone[/url]

[url=http://suhagraxs.online/]suhagra 100mg price canadian pharmacy[/url]

[url=http://doxycyclinedsp.online/]buy generic doxycycline[/url]

[url=http://lyricadp.online/]cost of lyrica medication[/url]

[url=https://iprednisone.online/]prednisone online[/url]

[url=https://lyricanx.online/]buy lyrica mexico[/url]

[url=https://baclofendl.online/]lioresal 10 mg price[/url]

[url=http://lyricatb.online/]buy lyrica online canada[/url]

[url=http://prednisonefn.online/]prednisone cost canada[/url]

Hi there! Do you know if they make any plugins to protect against hackers? I’m kinda paranoid about losing everything I’ve worked hard on. Any recommendations?

[url=http://lisinoprilrm.online/]lisinopril 20 mg tablet cost[/url]

[url=http://baclofendl.online/]baclofen 025[/url]

[url=http://azithromycini.online/]order zithromax[/url]

[url=https://clomip.com/]how can i get clomid online[/url]

[url=https://lisinoprilas.online/]prinivil brand name[/url]

[url=https://esynthroid.online/]synthroid 100mcg tab[/url]

[url=https://lyricanx.online/]lyrica 100 mg[/url]

[url=https://albuterolhi.online/]buy albuterol online india[/url]

[url=http://valtrexbc.online/]valtrex 1000 mg price in india[/url]

[url=https://vermoxpr.online/]vermox tablets buy online[/url]

Срочный прокат инструмента в название населенного пункта с инструментом без лишних трат доступны современные модели электроинструментов решение для ремонтных работ инструмент доступно в нашем сервисе

Прокат инструмента для выполнения задач

Сберегайте деньги на покупке инструмента с нашими услугами проката

Отличный выбор для ремонта автомобиля нет инструмента? Не проблема, в нашу компанию за инструментом!

Улучшите результаты с качественным инструментом из нашего сервиса

Аренда инструмента для садовых работ

Не расходуйте деньги на покупку инструмента, а арендуйте инструмент для разнообразных задач в наличии

Разнообразие инструмента для осуществления различных работ

Профессиональная помощь в выборе нужного инструмента от опытных нашей компании

Будьте в плюсе с услугами Аренда инструмента для строительства дома или квартиры

Наша команда – лучший партнер в аренде инструмента

Профессиональный инструмент для ремонтных работ – в нашем прокате инструмента

Не знаете, какой инструмент подойдет? Мы поможем с подбором инструмента в нашем сервисе.

прокат аренда без залога [url=http://www.meteor-perm.ru/]http://www.meteor-perm.ru/[/url].

Срочный прокат инструмента в городском округе с инструментом без дополнительных расходов доступны современные модели Удобное решение для строительных работ инструмент выгодно в нашем сервисе

Аренда инструмента для вашего задач

Сберегайте деньги на покупке инструмента с нашими услугами проката

Отличный выбор для У вашей компании нет инструмента? Не проблема, на наш сервис за инструментом!

Улучшите результаты с качественным инструментом из нашего сервиса

Арендовать инструмента для ремонтных работ

Не тяните деньги на покупку инструмента, а воспользуйтесь арендой инструмент для любых задач в наличии

Широкий ассортимент инструмента для проведения различных работ

Качественная помощь в выборе нужного инструмента от опытных нашей компании

Будьте в плюсе с услугами Аренда инструмента для строительства дома или квартиры

Наша команда – надежный партнер в аренде инструмента

Профессиональный инструмент для ремонтных работ – в нашем прокате инструмента

Не можете определиться с выбором? Мы поможем с подбором инструмента в нашем сервисе.

аренда строительного электроинструмента [url=http://meteor-perm.ru/]http://meteor-perm.ru/[/url].

Thanks for your marvelous posting! I seriously enjoyed reading it, you could be a great author. I will be sure to bookmark your blog and will eventually come back from now on. I want to encourage continue your great posts, have a nice weekend!

[url=https://azithromycinq.online/]zithromax singapore[/url]

[url=http://esynthroid.online/]synthroid 10 mcg[/url]

[url=http://diflucanld.online/]diflucan pill canada[/url]

[url=http://lisinoprilas.online/]lisinopril 20mg discount[/url]

[url=https://silagra.cyou/]silagra 25 mg price[/url]

After checking out a few of the blog articles on your site, I honestly like your way of blogging. I saved it to my bookmark site list and will be checking back soon. Please check out my web site as well and let me know how you feel.

[url=http://ivermectin.guru/]stromectol generic[/url]

[url=https://azithromycin.digital/]azithromycin otc[/url]

[url=http://bactrim.cyou/]bactrim 875 mg[/url]

[url=https://amoxil.cfd/]augmentin 375 mg price india[/url]

[url=http://synthroid.cyou/]synthroid 100 pill[/url]

[url=https://vardenafil.directory/]brand levitra[/url]

fantastic points altogether, you simply gained a brand new reader. What would you suggest about your post that you made a few days ago? Any positive?

[url=http://augmentin.best/]amoxicillin coupon[/url]

What’s up to all, how is everything, I think every one is getting more from this website, and your views are pleasant designed for new users.

[url=http://gabapentin.cfd/]gabapentin cost[/url]